Llama-2-Chat, which is optimized for dialogue, has shown performance similar to popular closed-source models such as ChatGPT and PaLM. In this guide we will fine-tune the Llama-2 7B Chat model.

Steering the fine-tune with prompt engineering: when it comes to fine-tuning…

LLaMA 2.0 was released last week, setting the benchmark for the best open-source (OS) language model. Here's a guide on how you can…

The Llama 2 paper, "Llama 2: Open Foundation and Fine-Tuned Chat Models", states: "In this work, we develop and release Llama 2, a collection of pretrained and fine-tuned…"

For LLaMA 2, the answer is yes. This is one of the attributes that makes it significant. While the exact license is Meta's own and not one of the…
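The guide does not say which fine-tuning method it uses. One common parameter-efficient option is LoRA. LoRA freezes a pretrained weight matrix W and trains only a low-rank update, so a layer computes h = Wx + (α/r)·B(Ax). The plain-Python sketch below is an illustration under stated assumptions, not the guide's code. The 4096 × 4096 matrix size matches the hidden size of Llama 2 7B. The rank r = 8, α = 16 and the toy matrices are hypothetical choices.

```python
from __future__ import annotations

import random


def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full fine-tune params, LoRA params) for one d_out x d_in matrix."""
    full = d_out * d_in           # every entry of W would be trainable
    lora = rank * (d_out + d_in)  # B is d_out x r, A is r x d_in
    return full, lora


def matvec(m: list[list[float]], v: list[float]) -> list[float]:
    """Dense matrix-vector product on nested lists."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W, A, B, x, alpha: float, rank: int) -> list[float]:
    """Compute h = W x + (alpha / rank) * B (A x) without forming B @ A."""
    base = matvec(W, x)               # frozen pretrained path
    update = matvec(B, matvec(A, x))  # trainable low-rank path
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


if __name__ == "__main__":
    # Assumed shape: one 4096 x 4096 projection, LoRA rank 8 (hypothetical).
    full, lora = lora_param_counts(4096, 4096, rank=8)
    print(f"full: {full:,}  LoRA: {lora:,}  ({lora / full:.2%})")

    # Toy 3x3 layer with rank 2. As in the LoRA paper, A starts random and
    # B starts at zero, so the adapted layer initially equals the frozen one.
    random.seed(0)
    W = [[random.gauss(0, 1) for _ in range(3)] for _ in range(3)]
    A = [[random.gauss(0, 1) for _ in range(3)] for _ in range(2)]
    B = [[0.0, 0.0] for _ in range(3)]
    x = [1.0, 2.0, 3.0]
    assert lora_forward(W, A, B, x, alpha=16, rank=2) == matvec(W, x)
```

Only A and B would receive gradients during training. Because the product BA has the same shape as W, it can be merged into W after training, so inference adds no extra cost. QLoRA applies the same idea on top of a quantized, frozen base model.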

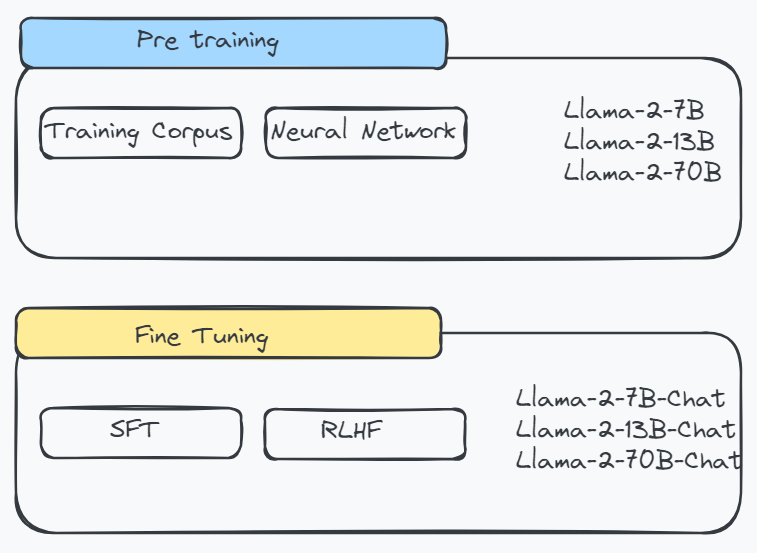

Chat with Llama 2 70B: customize Llama's personality by clicking the settings button. "I can explain concepts, write poems and code, solve logic puzzles, or even name your…"

Llama 2 is a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. This is the repository for the 13B pretrained model, converted for…

Llama 2 7B and 13B are now available in Web LLM; try them out in the chat demo. Llama 2 70B is also supported: if you have an Apple Silicon Mac with 64GB or more of memory, you can follow the project's instructions to run it.

Experience the power of Llama 2, the second-generation large language model by Meta. Choose from three model sizes, pre-trained on 2 trillion tokens and fine-tuned with over a million human…

Getting started with Llama 2: create a conda environment with PyTorch and additional dependencies, then download the desired model from Hugging Face (hf), either with git-lfs or with the llama download script.
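Chat front-ends like the Llama 2 70B demo commonly implement a "personality" setting as a system prompt. Llama-2-Chat expects a specific prompt layout. Meta's reference `chat_completion` code builds it from `[INST]`/`[/INST]` markers and wraps the system prompt in `<<SYS>>`/`<</SYS>>` inside the first user turn. The function below reproduces that layout as a string, with some simplifications:

- The name `build_llama2_chat_prompt` is hypothetical.
- The BOS/EOS markers are written as the literal text `<s>` and `</s>`. The reference code instead adds them as special token IDs during tokenization.

```python
from __future__ import annotations

B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"
# Rendered as text here; real tokenizers emit these as special token IDs.
BOS, EOS = "<s>", "</s>"


def build_llama2_chat_prompt(system: str | None,
                             turns: list[tuple[str, str]],
                             user_message: str) -> str:
    """Render a Llama-2-Chat prompt (hypothetical helper).

    system: optional system prompt, e.g. the assistant's "personality".
    turns: earlier (user, assistant) exchanges, oldest first.
    user_message: the new message the model should answer.
    """
    users = [u for u, _ in turns] + [user_message]
    if system:
        # The system block is folded into the first user message.
        users[0] = B_SYS + system + E_SYS + users[0]
    parts = []
    # Each completed exchange is wrapped as BOS [INST] user [/INST] answer EOS.
    for (_, answer), user in zip(turns, users):
        parts.append(f"{BOS}{B_INST} {user.strip()} {E_INST} {answer.strip()} {EOS}")
    # The final user message is left open for the model to complete.
    parts.append(f"{BOS}{B_INST} {users[-1].strip()} {E_INST}")
    return "".join(parts)


if __name__ == "__main__":
    print(build_llama2_chat_prompt(
        "You are a cheerful assistant who answers in one sentence.",
        [],
        "Explain what a language model is.",
    ))
```

Using the same layout for fine-tuning examples and for inference keeps the model's input consistent with what it saw in training.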

This repo contains GGUF format model files for Meta Llama 2's Llama 2 7B Chat. GGUF is a new format introduced by the llama.cpp team on August 21st, 2023. It is a replacement for GGML, which is no longer supported by llama.cpp.

A related GitHub issue in PromtEngineer/localGPT (Issue 652) reports an error when running TheBloke/Llama-2-7b-Chat-GGUF on CPU, using llama.cpp for GGUF/GGML quantized models.

Coupled with the release of Llama models and parameter-efficient techniques to fine-tune them (LoRA, QLoRA), this created a rich ecosystem…
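To make the GGUF format more concrete, here is a sketch based on the GGUF specification in the ggml repository. A GGUF file starts with a fixed header:

- the 4-byte magic `GGUF`;
- a uint32 format version;
- from version 2 onward, a uint64 count of tensors and a uint64 count of metadata key/value pairs.

These fields are little-endian in the usual case. The reader below parses only that header using the standard-library `struct` module. It is not llama.cpp's loader. It deliberately rejects version 1 files, whose counts were 32-bit. The file name in the usage comment is hypothetical.

```python
from __future__ import annotations

import io
import struct
from typing import BinaryIO, NamedTuple

GGUF_MAGIC = b"GGUF"


class GGUFHeader(NamedTuple):
    version: int
    tensor_count: int
    metadata_kv_count: int


def read_gguf_header(f: BinaryIO) -> GGUFHeader:
    """Parse the fixed-size header at the start of a little-endian GGUF file."""
    magic = f.read(4)
    if magic != GGUF_MAGIC:
        raise ValueError(f"not a GGUF file (magic bytes {magic!r})")
    (version,) = struct.unpack("<I", f.read(4))
    if version < 2:
        # Version 1 stored the two counts as uint32; not handled in this sketch.
        raise ValueError(f"unsupported GGUF version {version}")
    tensor_count, kv_count = struct.unpack("<QQ", f.read(16))
    return GGUFHeader(version, tensor_count, kv_count)


if __name__ == "__main__":
    # Synthetic header bytes (version 3, 2 tensors, 5 metadata pairs).
    fake = io.BytesIO(GGUF_MAGIC + struct.pack("<IQQ", 3, 2, 5))
    print(read_gguf_header(fake))

    # With a real download (hypothetical file name):
    # with open("llama-2-7b-chat.Q4_K_M.gguf", "rb") as fh:
    #     print(read_gguf_header(fh))
```

The metadata key/value pairs and tensor descriptors follow this header. They are what allow a single GGUF file to carry the model's settings alongside its quantized weights.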

Llama 2 7B - GGUF: this repo contains GGUF format model files for Meta's Llama 2 7B. Among the quantization options, one is described as offering medium, balanced quality, with the advice to prefer using Q4_K_M.

Llama 2 encompasses a range of generative text models, both pretrained and fine-tuned, with sizes from 7 billion to 70 billion parameters. Llama 2 7B is swift but lacks depth, making it suitable for basic tasks like summaries or categorization. Llama 2 13B strikes a balance…
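The choice between sizes is also a memory question. As a rough rule, the weights alone take parameters × bits per weight / 8 bytes. The sketch below applies that rule to the nominal 7B, 13B and 70B sizes at 16 bits and at a nominal 4 bits, using decimal gigabytes.

These bit widths are illustrative assumptions:

- K-quant formats such as Q4_K_M also store per-block scale data, so they use somewhat more than 4 bits per weight.
- A running model additionally needs memory for the KV cache and runtime buffers.

The `largest_that_fits` helper is hypothetical.

```python
from __future__ import annotations

GB = 1e9  # decimal gigabytes

# Nominal parameter counts, listed smallest to largest; the real checkpoints
# differ slightly (for example, "7B" is closer to 6.7 billion parameters).
LLAMA2_PARAMS = {"7B": 7e9, "13B": 13e9, "70B": 70e9}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, ignoring KV cache and runtime overhead."""
    return n_params * bits_per_weight / 8 / GB


def largest_that_fits(budget_gb: float, bits_per_weight: float) -> str | None:
    """Largest Llama 2 size whose weights alone fit in budget_gb (hypothetical)."""
    fitting = [name for name, n in LLAMA2_PARAMS.items()
               if weight_memory_gb(n, bits_per_weight) <= budget_gb]
    # Dict order is ascending by size, so the last fitting entry is the largest.
    return fitting[-1] if fitting else None


if __name__ == "__main__":
    for name, n in LLAMA2_PARAMS.items():
        print(f"{name}: ~{weight_memory_gb(n, 16):.1f} GB at 16-bit, "
              f"~{weight_memory_gb(n, 4):.1f} GB at 4-bit")
    print("Largest at 4-bit within 64 GB:", largest_that_fits(64, 4))
```

By this arithmetic, the 70B weights need about 140 GB at 16 bits but about 35 GB at 4 bits. That suggests why a 4-bit 70B model can run on a 64GB machine while a 16-bit one cannot. The 7B weights shrink from about 14 GB to about 3.5 GB.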
